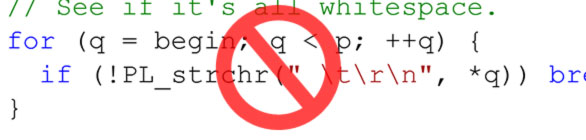

I see FindChar, Substring, ToInteger and even atoi, strchr, strstr and sscanf craziness all over the Mozilla code base. There are, though, much better and, more importantly, safer ways to parse even very simple input.
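
To illustrate what "safer" means here, this is a small standalone sketch of the kind of pitfall the C-style helpers carry. ParseNumberWithAtoi is a hypothetical name used only for this illustration; the only real call involved is the standard std::atoi.

```cpp
#include <cstdlib>

// Hypothetical helper, named for illustration only.
// std::atoi has no way to report a failure: it returns 0 when nothing
// could be converted and it silently ignores trailing garbage.
int ParseNumberWithAtoi(const char* text) {
  return std::atoi(text);
}
```

With this helper, "abc" and "0" both yield 0 and "80abc" is accepted as 80, so the caller cannot tell malformed input from valid input without extra manual checks.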

I wrote a parser class, with an API derived from lexical analyzers, that makes parsing simple inputs very easy. Just include mozilla/Tokenizer.h and use the class mozilla::Tokenizer. It implements a subset of the features of a lexical analyzer. It also nicely hides boundary checks of the input buffer from the consumer.

To describe the principle briefly: Tokenizer recognizes tokens such as whole words, integers, white space and special characters. The consumer never works directly with the string or its characters, only with pre-parsed parts (identified tokens) returned by this class.

There are two main methods of Tokenizer:

    bool Next(Token& result);

If there is anything to read from the input at the current internal read position, including the EOF, Next returns true and result is filled with a token type and an appropriate value, easily accessible via a simple variant-like API. The internal read cursor is shifted to the start of the next token in the input before this method returns.

    bool Check(const Token& tokenToTest);

If the token at the current internal read position is equal (by type and value) to what has been passed in the tokenToTest argument, true is returned and the internal read cursor is shifted to the next token. Otherwise (the token differs from the expected one) false is returned and the read cursor is left unaffected.
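
To make the cursor behavior of these two methods concrete, here is a minimal standalone sketch. It is not the Gecko implementation: MiniTokenizer, Token, Kind and all member names are hypothetical stand-ins invented for this illustration, it uses std::string instead of the Mozilla string classes, and its token rules are simplified. It only demonstrates that Next always consumes a token, while Check consumes one only on a match.

```cpp
#include <cctype>
#include <cstddef>
#include <cstdint>
#include <string>
#include <utility>

// Hypothetical stand-ins for illustration; NOT the mozilla::Tokenizer types.
enum class Kind { Word, Integer, White, Char, Eof };

struct Token {
  Kind kind = Kind::Eof;
  std::string text;          // filled for Word
  std::uint64_t number = 0;  // filled for Integer
  char ch = 0;               // filled for White and Char

  bool operator==(const Token& other) const {
    return kind == other.kind && text == other.text &&
           number == other.number && ch == other.ch;
  }
};

class MiniTokenizer {
 public:
  explicit MiniTokenizer(std::string input) : mInput(std::move(input)) {}

  // Always consumes the token at the cursor. Returns false only after
  // the EOF token has already been handed out.
  bool Next(Token& result) {
    if (mPastEof) return false;
    mCursor = Parse(mCursor, result);
    mPastEof = (result.kind == Kind::Eof);
    return true;
  }

  // Consumes the token at the cursor only if it equals `expected`;
  // on a mismatch the cursor stays where it was.
  bool Check(const Token& expected) {
    if (mPastEof) return false;
    Token found;
    std::size_t next = Parse(mCursor, found);
    if (!(found == expected)) return false;
    mCursor = next;
    mPastEof = (found.kind == Kind::Eof);
    return true;
  }

 private:
  static bool IsDigit(char c) {
    return std::isdigit(static_cast<unsigned char>(c)) != 0;
  }
  static bool IsAlpha(char c) {
    return std::isalpha(static_cast<unsigned char>(c)) != 0;
  }

  // Parses one token starting at `pos`; returns the position just after it.
  // All bounds checks live here, so callers never index the string.
  std::size_t Parse(std::size_t pos, Token& out) const {
    out = Token();
    if (pos >= mInput.size()) {
      out.kind = Kind::Eof;
      return pos;
    }
    char c = mInput[pos];
    if (IsDigit(c)) {
      out.kind = Kind::Integer;  // overflow is ignored in this sketch
      while (pos < mInput.size() && IsDigit(mInput[pos])) {
        out.number = out.number * 10 +
                     static_cast<std::uint64_t>(mInput[pos++] - '0');
      }
      return pos;
    }
    if (IsAlpha(c)) {
      out.kind = Kind::Word;
      while (pos < mInput.size() &&
             (IsAlpha(mInput[pos]) || IsDigit(mInput[pos]))) {
        out.text += mInput[pos++];
      }
      return pos;
    }
    out.kind = (c == ' ' || c == '\t') ? Kind::White : Kind::Char;
    out.ch = c;
    return pos + 1;
  }

  std::string mInput;
  std::size_t mCursor = 0;
  bool mPastEof = false;
};
```

In this sketch a failed Check leaves the cursor untouched, so a following Check or Next sees the very same token again. That is the property that lets you probe for alternatives without any manual position bookkeeping.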

A few usage examples:

Construction

    #include "mozilla/Tokenizer.h"

    mozilla::Tokenizer p(NS_LITERAL_CSTRING("Sample string 2015."));

Reading a single token, examining it

    mozilla::Tokenizer::Token t;
    bool read = p.Next(t);
    // read == true, we have read something and t has been filled

    // Following our example string...
    if (t.Type() == mozilla::Tokenizer::TOKEN_WORD) {
      t.AsString(); // returns "Sample"
    }

Checking on a token value and automatically skipping on a positive test

    if (!p.CheckWhite()) {
      throw "I expect a space here!";
    }

    read = p.Next(t);
    // read == true
    t.Type() == mozilla::Tokenizer::TOKEN_WORD;
    t.AsString() == "string";

    if (!p.CheckWhite()) {
      throw "A white space is expected here!";
    }

Reading numbers

    read = p.Next(t);
    // read == true
    t.Type() == mozilla::Tokenizer::TOKEN_INTEGER;
    t.AsInteger() == 2015;

Reaching the end of the input

    read = p.Next(t);
    // read == true
    t.Type() == mozilla::Tokenizer::TOKEN_CHAR;
    t.AsChar() == '.';

    read = p.Next(t);
    // read == true
    t.Type() == mozilla::Tokenizer::TOKEN_EOF;

    read = p.Next(t);
    // read == false, we are past the EOF
    // t is undefined here!

More features

To learn about the more advanced features of the Tokenizer - there are not that many, don't be scared ;) - look at the well-documented Tokenizer.h file under xpcom/ds.

As a teaser, you can go through this more advanced example or check out the gtest for Tokenizer:

    #include "mozilla/Tokenizer.h"

    using namespace mozilla;

    {
      // A simple list of key:value pairs delimited by commas
      nsCString input("message:parse me,result:100");

      // Initialize the parser with an input string
      Tokenizer p(input);

      // A helper var keeping type and value of the token just read
      Tokenizer::Token t;

      // Loop over all tokens in the input
      while (p.Next(t)) {
        if (t.Type() == Tokenizer::TOKEN_WORD) {
          // A 'key' name found
          if (!p.CheckChar(':')) {
            // Must be followed by a colon
            return; // unexpected character
          }

          // Note that here the input read position is just after the colon

          // Now switch by the key string
          if (t.AsString() == "message") {
            // Start grabbing the value
            p.Record();

            // Loop until EOF or comma
            while (p.Next(t) && !t.Equals(Tokenizer::Token::Char(',')))
              ;

            // Claim the result
            nsAutoCString value;
            p.Claim(value);
            MOZ_ASSERT(value == "parse me");

            // We must revert the comma so that the code below recognizes the flow correctly
            p.Rollback();
          } else if (t.AsString() == "result") {
            if (!p.Next(t) || t.Type() != Tokenizer::TOKEN_INTEGER) {
              return; // expected a value and that value must be a number
            }

            // Get the value, here you know it's a valid number
            uint32_t number = t.AsInteger();
            MOZ_ASSERT(number == 100);
          } else {
            // Here t.AsString() is any key but 'message' or 'result', ready to be handled
          }

          // On comma we loop again
          if (p.CheckChar(',')) {
            // Note that now the read position is after the comma
            continue;
          }

          // No comma? Then only EOF is allowed
          if (p.CheckEOF()) {
            // Cleanly parsed the string
            break;
          }
        }

        return; // The input is not properly formatted
      }
    }

Currently it works only with ASCII input, but it can easily be enhanced to also support UTF-8/16 encodings or even specific code pages if needed.
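
Until that happens, a caller may want to reject non-ASCII input before handing it to the parser. Here is a minimal standalone sketch of such a guard; IsAsciiOnly is a hypothetical helper written for this illustration, not part of Tokenizer.h, and in-tree code would likely prefer whatever the Mozilla string utilities already offer.

```cpp
#include <string>

// Hypothetical helper: returns true when every byte of the input
// is in the 7-bit ASCII range (0x00..0x7F).
bool IsAsciiOnly(const std::string& input) {
  for (unsigned char c : input) {
    if (c > 0x7F) {
      return false;
    }
  }
  return true;
}
```

The loop iterates over bytes as unsigned char so that the comparison also behaves correctly on platforms where plain char is signed.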

Nice idea! It doesn't look like you've converted any existing code to use the new class, though? That's a shame... it's a pet peeve of mine that we often introduce a new, better way of doing something, but rarely bother to change existing code to use it. Any plans to do that?

Thanks! I definitely have plans. There are places under netwerk/ that are worth converting to use Tokenizer, but it's definitely not limited to this module. I'll progressively pick existing places and convert.

Now that the Tokenizer has landed, I can at least require its use in reviews.